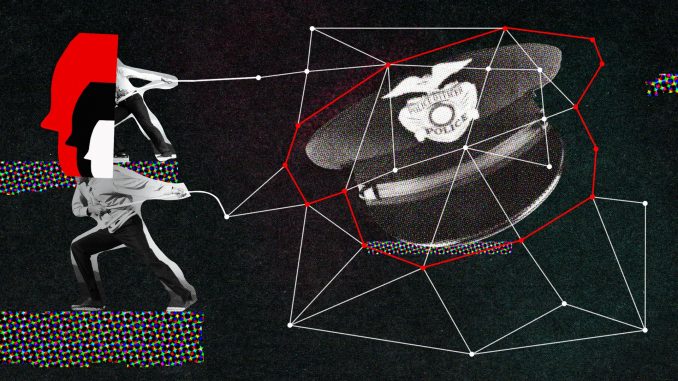

Predictive policing, the use of data-driven algorithms to forecast potential criminal activity, has emerged as a controversial innovation in law enforcement. At first glance, the concept seems promising: by analyzing historical crime data, police departments can allocate resources more efficiently, anticipate trouble spots, and potentially prevent crimes before they occur. However, beneath this veneer of technological progress lies a complex web of ethical concerns that challenge the very foundations of justice, fairness, and civil liberties. As predictive policing gains traction, it becomes increasingly important to scrutinize not just its effectiveness, but its moral implications.

One of the most pressing ethical issues surrounding predictive policing is the risk of reinforcing existing biases. Algorithms are only as objective as the data they are trained on, and in many cases that data reflects decades of discriminatory policing practices. If certain neighborhoods have historically been over-policed, the data will show higher recorded crime rates in those areas, not necessarily because more crime occurs there, but because more police were present to record incidents and make arrests. When predictive models rely on such skewed data, they can perpetuate a cycle of surveillance and enforcement that disproportionately targets marginalized communities. This creates a feedback loop in which increased police presence leads to more recorded incidents, which in turn justifies further policing, regardless of actual crime trends.
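The feedback loop described above can be made concrete with a small toy model. The sketch below is illustrative only and does not represent any real predictive policing system: the function name `simulate_feedback`, the two areas, and every number in it are assumptions chosen for the example. It assumes two areas with identical true crime rates, a single patrol sent each round to whichever area has the most recorded incidents, and crime being recorded only where the patrol is present.

```python
def simulate_feedback(initial_records, true_rate, rounds):
    """Toy, deterministic model of a recorded-crime feedback loop.

    Hypothetical assumptions: one patrol per round goes to the area with
    the most recorded incidents so far, and only crime in the patrolled
    area is recorded.
    """
    records = list(initial_records)
    history = [tuple(records)]
    for _ in range(rounds):
        # The "prediction": flag the area with the most past records.
        target = records.index(max(records))
        # Only the patrolled area adds to the record, at its true rate.
        records[target] += true_rate[target]
        history.append(tuple(records))
    return history


# Both areas have the same true rate (5 incidents per round), but area A
# starts with slightly more recorded incidents because of past patrolling.
history = simulate_feedback(initial_records=[12, 10], true_rate=[5, 5], rounds=4)

for step, (area_a, area_b) in enumerate(history):
    share_a = area_a / (area_a + area_b)
    print(f"round {step}: A={area_a} B={area_b} share of records in A={share_a:.2f}")
```

Under these assumptions, area A's recorded total grows from 12 to 32 over four rounds while area B's stays at 10, even though the true rates are identical. Real systems allocate resources in more graded ways, so the effect may be weaker or slower in practice, but the direction of the distortion is the same one the paragraph describes.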

The ethical principle of justice demands that individuals be treated fairly and equitably under the law. Predictive policing, however, risks undermining this principle by shifting the focus from individual behavior to statistical probabilities. Instead of responding to specific acts of wrongdoing, law enforcement may begin to act on predictions about who might commit a crime or where a crime might occur. This raises serious concerns about due process and the presumption of innocence. People may find themselves under scrutiny not because of their actions, but because of their demographic profile or geographic location. Such practices challenge the notion of individualized suspicion, a cornerstone of ethical policing and legal standards.

Another concern is the potential erosion of privacy and autonomy. Predictive policing often involves the collection and analysis of vast amounts of personal data, including social media activity, location history, and even behavioral patterns. While these data points can enhance predictive accuracy, they also intrude into the private lives of citizens. The ethical question here is whether the benefits of crime prevention justify such invasions of privacy. Moreover, when individuals are aware that their movements and interactions are being monitored, it can have a chilling effect on free expression and association. The balance between public safety and personal freedom becomes increasingly precarious in a world where surveillance is normalized.

Transparency and accountability are also critical ethical dimensions. Predictive policing systems are often developed by private companies and sold as proprietary technologies, making it difficult for the public to understand how decisions are made. If an algorithm flags a neighborhood as high-risk, what criteria were used? If a person is identified as a potential offender, what data supported that conclusion? Without clear answers, communities are left in the dark, unable to challenge or verify the legitimacy of these tools. Ethical governance requires that such systems be open to scrutiny, with mechanisms in place to audit their performance and address errors or abuses.

The deployment of predictive policing also raises questions about the broader societal impact. When law enforcement becomes increasingly reliant on technology, there is a risk of dehumanizing the process of justice. Officers may begin to trust algorithms more than their own judgment, leading to a loss of empathy and discretion. Communities may feel alienated, perceiving police as agents of a faceless system rather than public servants. This can erode trust and cooperation, which are essential for effective policing. Ethical policing is not just about preventing crime—it’s about building relationships, understanding context, and respecting the dignity of all individuals.

Despite these concerns, proponents argue that predictive policing can be ethically implemented with the right safeguards. For example, algorithms can be designed to minimize bias, and data sets can be curated to exclude discriminatory patterns. Oversight bodies can monitor usage and ensure accountability. Community engagement can help align predictive strategies with local needs and values. These measures are important, but they require a commitment to ethical reflection and continuous improvement. Technology alone cannot solve the complex social issues that underlie crime—it must be guided by principles that prioritize human rights and social justice.

In the end, the ethics of predictive policing are not just about algorithms and data—they are about the kind of society we want to build. Do we value efficiency over fairness? Do we prioritize security at the expense of liberty? These are not easy questions, and they demand thoughtful dialogue among policymakers, technologists, law enforcement, and the public. Predictive policing may offer powerful tools, but its ethical legitimacy depends on how those tools are used. As we navigate this evolving landscape, we must ensure that our pursuit of safety does not come at the cost of our most fundamental values.
